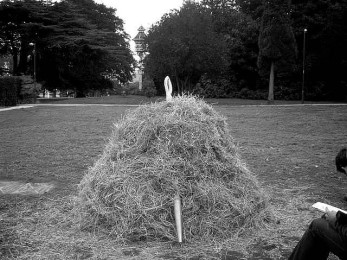

Companies relying on Big Data analytics might be disappointed to discover that the technology is not so good at finding a needle in a haystack after all.

Currently the best way to sort large databases of unstructured text is Latent Dirichlet allocation (LDA), a modelling technique that identifies text within documents as belonging to a limited number of still-unknown topics.

According to an analysis published in the American Physical Society’s journal Physical Review X, LDA has become one of the most common ways to tackle the computationally difficult problem of automatically classifying specific parts of human language into a context-appropriate category.

According to Luis Amaral, a physicist whose specialty is the mathematical analysis of complex systems and who wrote the paper, LDA is inaccurate.

Amaral’s team tested LDA by repeatedly analysing the same sets of unstructured data: 23,000 scientific papers and 1.2 million Wikipedia articles written in several different languages.

Not only was LDA inaccurate, its analyses were inconsistent, returning the same results only 80 percent of the time even when using the same data and the same analytic configuration.

Amaral said that accuracy of 90 percent with 80 percent consistency sounds good, but the scores are “actually poor, since they are for an easy case.”
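To make the consistency figure more concrete, here is a minimal Python sketch of one way two runs of a topic model on the same documents could be compared. The article does not say how the paper measured reproducibility, so the function `best_match_agreement` and its scoring rule are assumptions for illustration only, not the authors' method. Topic numbers are arbitrary labels, so each topic in one run is first mapped to the topic it most often coincides with in the other run.

```python
from collections import Counter


def best_match_agreement(run_a, run_b):
    """Share of documents on which two runs agree (hypothetical measure).

    run_a and run_b hold one topic label per document. Each label in
    run_a is mapped to the run_b label it co-occurs with most often;
    the mapping is greedy and may send two run_a labels to the same
    run_b label, so this is a rough score, not a one-to-one matching.
    """
    if len(run_a) != len(run_b) or not run_a:
        raise ValueError("runs must be non-empty and of equal length")

    # Count how often each (label in run A, label in run B) pair occurs.
    pair_counts = Counter(zip(run_a, run_b))

    # For every run A label, keep the size of its largest overlap.
    best_overlap = {}
    for (label_a, _label_b), count in pair_counts.items():
        if count > best_overlap.get(label_a, 0):
            best_overlap[label_a] = count

    return sum(best_overlap.values()) / len(run_a)


if __name__ == "__main__":
    first_run = [0, 0, 0, 0, 1]
    second_run = [0, 0, 0, 1, 1]
    print(best_match_agreement(first_run, second_run))
```

A score of 1.0 would mean both runs grouped the documents identically, even if the topic numbers were swapped; lower scores mean the same data and settings produced different groupings.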

On the kinds of data that big data is often praised for its ability to manage, the results would be far less accurate and far less reproducible, according to the paper.

The team created an alternative method called TopicMapping, which first breaks words down into bases (treating “stars” and “star” as the same word), then eliminates conjunctions, pronouns and other “stop words” that modify the meaning but not the topic, using a standardized list.
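The two preprocessing steps described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the TopicMapping code: `naive_stem` is a deliberately crude stand-in for a real stemmer, and `STOP_WORDS` is a tiny hypothetical list rather than the standardized one the paper refers to.

```python
import re

# Hypothetical, deliberately tiny stop-word list (illustration only).
STOP_WORDS = {
    "a", "an", "and", "are", "but", "he", "in", "is", "it",
    "of", "or", "she", "the", "they", "to", "we",
}


def naive_stem(word):
    """Strip a plural "s" so that "stars" and "star" become the same token.

    Crude on purpose: real stemmers handle many more suffixes.
    """
    if len(word) > 3 and word.endswith("s") and not word.endswith("ss"):
        return word[:-1]
    return word


def preprocess(text):
    """Lower-case and tokenise, reduce words to bases, then drop stop words."""
    tokens = re.findall(r"[a-z]+", text.lower())
    stems = [naive_stem(token) for token in tokens]
    return [stem for stem in stems if stem not in STOP_WORDS]


if __name__ == "__main__":
    print(preprocess("The stars and the star are bright"))
```

Only the words that carry the topic survive, and both forms of "star" count as the same word in whatever analysis follows.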

This approach delivered results that were 92 percent accurate and 98 percent reproducible, though, according to the paper, it only moderately improved the likelihood that any given result would be accurate.

The paper’s point was not that TopicMapping should replace LDA, but that the topic-analysis method that has become one of the most commonly used in big data analysis is far less accurate and far less consistent than previously believed.
